An IPv6 Journey Two Decades in the Making [Archived]

OUT OF DATE?

Here in the Vault, information is published in its final form and then not changed or updated. As a result, some content, specifically links to other pages and other references, may be out-of-date or no longer available.

Virginia Tech IPv6 Case Study

I’ve been at Virginia Tech since 1984, and I’ve seen a lot of technology come and go over the years, but one thing that is not going away is IPv6. Virginia Tech became interested in IPv6 very early on and has made considerable progress since we started working on it more than two decades ago. We wanted to make our university reachable by the whole Internet and to think ahead. In my opinion, the resources you put into keeping old IPv4 going would be better spent figuring out how to do IPv6 with your applications.

Virginia Tech’s IPv6 Timeline

In a nutshell, Virginia Tech has been routing IPv6 since 1997, and it all grew organically out of the Electrical Engineering Department. Given how long I’ve been at Virginia Tech, I have a lot of institutional knowledge about the networks there, so I’d like to share with you the journey we’ve taken.

The early days

As I mentioned, Virginia Tech began work on IPv6 early on. This was primarily due to a PhD candidate in our Electrical Engineering (EE) Department, David Lee. When RFC 1897 on IPv6 testing address allocations for the 6BONE network came out, we received an allocation, and he collaborated with Phil Benchoff to run IPv6 natively between hosts in the EE department, operating with the RFC 1897 prefix 5F05:2000::/32. The DNS zone ipv6.vt.edu was delegated to ocarina.ee.ipv6.vt.edu, and, working with the operating systems that were capable of doing IPv6 back then, Phil downloaded and installed an IPv6 kit for Digital Unix. In 1997, the RIPE NCC updated our routing record entry to include our Communication Network Services (CNS, as our department was called back then) record for inbound 6BONE, making us officially documented IPv6 users.
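
As a rough illustration of how a test prefix like that is put together, the sketch below unpacks the top 32 bits of 5F05:2000::/32. It assumes the RFC 1897 layout of a 3-bit format prefix, a 5-bit registry ID, a 16-bit autonomous system number, and 8 reserved bits; the function name and field names are made up for this example.

```python
import ipaddress

def decode_rfc1897_prefix(prefix: str) -> dict:
    """Split the top 32 bits of an RFC 1897 test prefix into its fields.

    Assumed layout (per RFC 1897): 3-bit format prefix, 5-bit registry ID,
    16-bit AS number, 8 reserved bits. Field names are illustrative only.
    """
    network = ipaddress.IPv6Network(prefix)
    top32 = int(network.network_address) >> 96  # keep only the first 32 bits
    return {
        "format_prefix": format(top32 >> 29, "03b"),         # first 3 bits
        "registry_id": format((top32 >> 24) & 0x1F, "05b"),  # next 5 bits
        "asn": (top32 >> 8) & 0xFFFF,                        # next 16 bits
        "reserved": top32 & 0xFF,                            # last 8 bits
    }

fields = decode_rfc1897_prefix("5f05:2000::/32")
print(fields)
```

Under that assumed layout, the prefix from this post breaks down into format prefix 010, registry ID 11111, and an AS number field of 1312, with the reserved byte set to zero.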

Building a parallel backbone

At the beginning, we had early experimental code from Cisco that could route IPv6 and that we could play with, but we found that code did not let us run IPv6 on our core backbone the way we wanted to. We were running an asynchronous transfer mode (ATM) network (that was the faster network back then), and we could not run the code on the 6509 platforms in our core to do that routing, so we built a parallel backbone just for IPv6 using much less expensive 7300 routers. We installed those in all the major switching centers where our core backbone was located, and the parallel network routed IPv6 across our core. At that time, there weren’t a lot of people using IPv6, and some operating systems did not support it yet.

After we deployed the 7300 parallel backbone, we began to find that users were able to enable IPv6, and we published information on our various listservs, including one for IPv6. We used those to raise awareness and ask if there was more interest in IPv6. Eventually we began to get buy-in, so we enabled locations on IPv6 over this parallel core network. By 2004 we had approximately 400 active users on the network. By the end of that year, we felt comfortable enough to route IPv6 at our border on the 6509 platform. We were not doing LAN emulation yet, but things were coming along.

Along the way we also had to renumber more than once. In 1998, a new RFC came out to replace the test allocation (RFC 2471, IPv6 Testing Address Allocation, which replaces RFC 1897), so we got a new allocation. With that we had to change addresses, renumber things, and reconfigure our routing. We had to renumber again when, on 6/6/06, the whole old 6BONE network was decommissioned along with the addresses from the newer RFC 2471 test allocation.

Going all in

In 2008, we went whole hog on IPv6. We felt good about the code Cisco had, so we began to route IPv6 at our border and set up tunnels to our providers. By then, the code could do IPv6 with LAN emulation at the ATM layer on the 6509 platforms in our core routing and switching areas. Along the way, we helped our vendors improve their code by finding bugs and getting them fixed. That year, we moved a lot of stuff over to IPv6. We had our first native IPv6 alarms in our pre-production IPv6 network monitoring system, meaning we could now monitor IPv6 status and receive alarms for IPv6. We moved off our parallel backbone to our regular core routers once we had disabled the last of the 7300 routers and turned IS-IS routing off. We began to use OSPFv3 as our Interior Gateway Protocol (IGP) across all our IPv6 networks.

We also cut over the rest of our academic networks, residential networks, and our 802.1X wireless networks. We gave our NTP servers AAAA records and created a peering point with the New River Valley Mall, which is just off campus, so we could peer with local providers that were IPv6-capable. We did this so that local traffic could get to us without going through the Internet somewhere else.

A big transition point

By the following year, we saw a lot of IPv6 traffic on campus. It was obvious people were using IPv6, given that operating systems like Windows had IPv6 enabled out of the box. In 2009, Google enabled IPv6 for our primary DNS resolvers, and once that happened, outside entities saw us as a huge IPv6 user. By 9 September, research released by Google showed 51% of hosts on the Virginia Tech network had working IPv6. The same research showed that only seven ASNs each accounted for 1% or more of Google’s IPv6 traffic, and Virginia Tech was one of them, producing 2% of the total IPv6 hits in the research. At the time, we were producing more IPv6 than anybody, and this was essentially when we became visible to the world over IPv6.

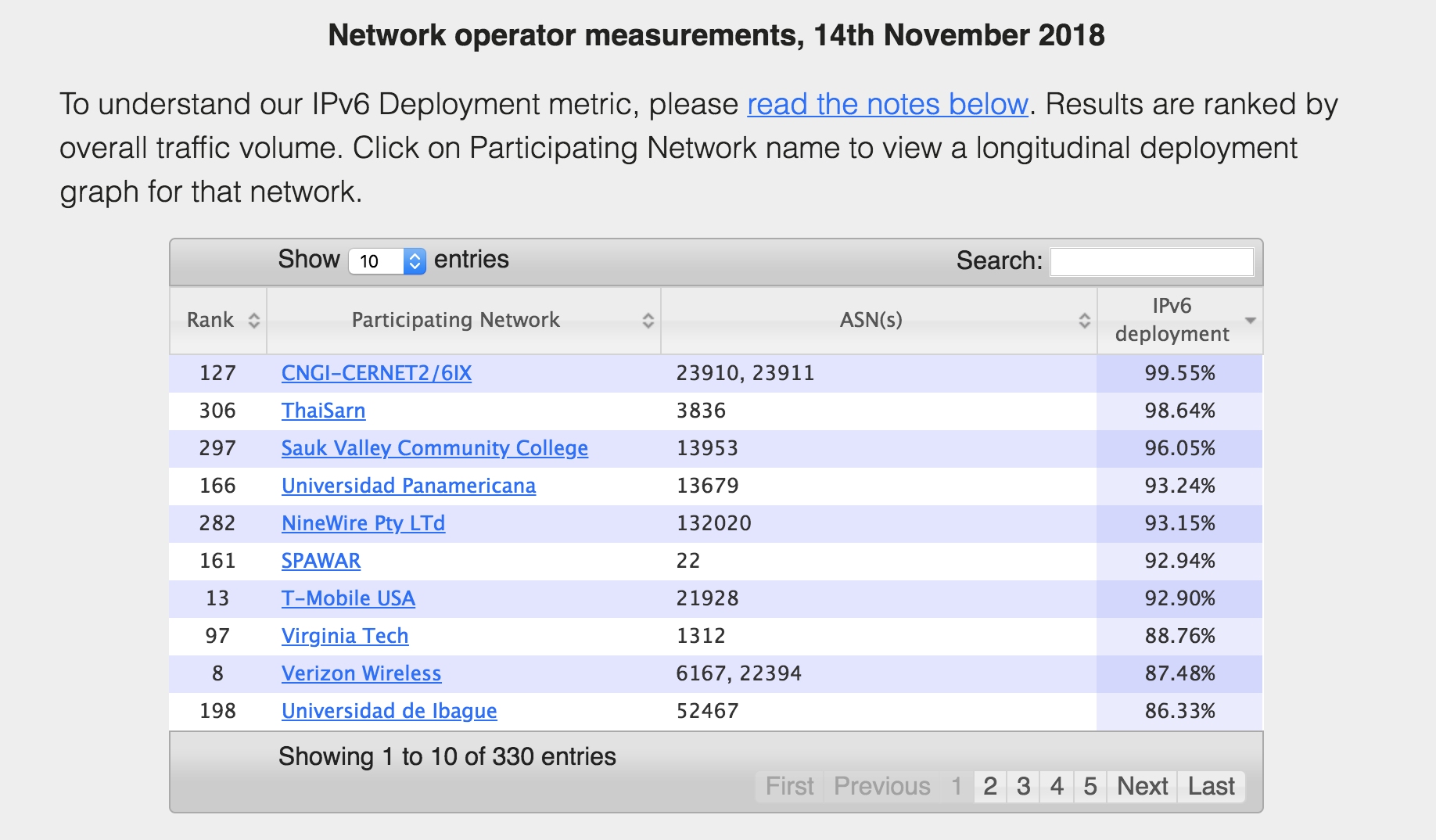

Then in 2011, the IANA free pool of IPv4 ran out, and around that time we started peering with lots of other providers that did IPv6. When RFC 6540 / BCP-177 came out in 2012, it required IPv6 support for all IP-capable nodes, so there were fewer excuses not to do IPv6 by then. Measurements from the World IPv6 Launch in 2012 showed Virginia Tech leading the network operator category with 59.1% of our nodes supporting IPv6, which increased to 64.03% only a few months later. One thing we were tickled about was enabling support for IPv6 across our web, mail SPF, DNS, NTP, and XMPP to get all green boxes across the mrp.net measurements by March 2016. Today, as of 14 November 2018, World IPv6 Launch Measurements show Virginia Tech as number eight, with almost 89% IPv6-capable and deployed.

It goes to show we still have 11% to go and we’ve been working on it since 1997. It’s not all going to happen in a day, and we still have some things to work on.

My advice for you

It’s very important to get your network engineers, architects, application administrators, and even administrative folks on board early on. Everybody needs to understand what it is you are trying to do and why. You don’t want to get into a situation where people are saying “those network guys broke us when they turned on IPv6.” Get everybody on the same page from the start.

You will definitely encounter resistance, but if you’re a network engineer you should be used to that, because you’ve encountered resistance the whole time you’ve been networking (ever have anyone ask you what you need a maintenance window for?). It comes with the territory. There will be excuses. There will be people who say “NAT is great, don’t change,” but that’s backward thinking, not forward thinking. Deploying IPv6 is not that bad, nor is it scary.

Other items you will want to consider:

- Vendor compatibility with all forms of network technology and applications you plan to use – For existing equipment as well as future purchases, consider IPv6 functionality, and perform a proof-of-concept test, either in your own lab or in the vendor’s, to confirm it meets your environment’s requirements before committing to a technology or vendor.
- Adding public-facing applications to your IPv6 transition plan early on – Web servers or other public-facing applications can increase Internet visibility by adding IPv6 reachability. Get network engineers and application administrators collaborating early in the process.
- Hardware and software purchasing options – Prefer, if not demand, full IPv6 functionality before making purchases. Discuss options with your purchasing agents. Develop strategic purchasing plans to support IPv6-enabled hardware and applications.
- Your number plan – As with legacy IP, determine how you may want to segment your network to optimize address usage, and consider traffic patterns so you can optimize traffic flows.
- Network technicians and engineers need training for IPv6 – Assuming that legacy IP works the same as IPv6 is a mistake.
- Architecture – Consider where the applications that will implement IPv6 sit in the network, along with load balancing, server configuration, host lists, access lists (ACLs), routing, switching, firewalls, DNS, and DHCPv6. Consider which troubleshooting and monitoring tools that support IPv6 are necessary. Baselining IPv6 network flow data and capturing IPv6 packets for inspection can lead to a better understanding of packet structure and natural IPv6 traffic patterns across your network.
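
To make the number-plan item a little more concrete, here is a minimal sketch using Python’s standard ipaddress module. It uses the documentation prefix 2001:db8::/32 in place of a real allocation and assumes a convention of one /48 per site and one /64 per LAN. The site names, prefix sizes, and helper functions are hypothetical and are not Virginia Tech’s actual plan.

```python
import ipaddress
from itertools import islice

# Documentation prefix (RFC 3849), standing in for a real allocation.
ALLOCATION = ipaddress.IPv6Network("2001:db8::/32")

def plan_sites(allocation, site_names, site_prefixlen=48):
    """Assign consecutive site-sized prefixes to the (hypothetical) site names."""
    subnets = allocation.subnets(new_prefix=site_prefixlen)
    # zip stops after the last name, so the generator is never fully expanded.
    return dict(zip(site_names, subnets))

def first_lans(site_prefix, count, lan_prefixlen=64):
    """Return the first `count` LAN-sized prefixes inside a site prefix."""
    return list(islice(site_prefix.subnets(new_prefix=lan_prefixlen), count))

sites = plan_sites(ALLOCATION, ["core", "academic", "residential", "wireless"])
for name, prefix in sites.items():
    print(f"{name:12} {prefix}")

for lan in first_lans(sites["academic"], 3):
    print("academic LAN:", lan)
```

Keeping each site on its own fixed-size block is one way to keep ACLs, routing summaries, and DNS delegations aligned with the same boundaries. Other splits may suit your traffic patterns better.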

Final words

Along the way, we saw performance improvements when we turned on IPv6. It was faster to reach many of the sites people wanted to go to, like Google (because it was IPv6-enabled). Yes, there were some issues early on with browsers going slower, due to Happy Eyeballs failing over slowly, but we were able to overcome those problems pretty quickly. Deploying IPv6 gave Virginia Tech visibility, in articles and otherwise, because we were early adopters. That helped us push our vendors to support IPv6 as well.

I want to leave you with the point that if everyone assumes IPv4 works exactly like IPv6, that’s a mistake. You need some training, even if it’s self-training. Get your network engineers and applications people up to speed on what it means to switch to a 128-bit address versus a 32-bit address. You want to be visible to all the Internet. You want to have an IPv6 presence. You want to be reachable by the whole Internet.
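
For a quick feel of the 128-bit versus 32-bit difference, the short sketch below compares one address of each family using Python’s standard ipaddress module. The addresses are documentation examples, not real hosts, and the variable names are only for illustration.

```python
import ipaddress

v4 = ipaddress.ip_address("192.0.2.1")    # documentation address (RFC 5737)
v6 = ipaddress.ip_address("2001:db8::1")  # documentation address (RFC 3849)

for addr in (v4, v6):
    print(f"IPv{addr.version}: {addr.max_prefixlen} bits, "
          f"{len(addr.packed)} bytes packed, written as {addr}")

# A single /64 LAN holds 2**64 addresses: the entire IPv4 space, squared.
lan = ipaddress.ip_network("2001:db8:0:1::/64")
whole_ipv4 = ipaddress.ip_network("0.0.0.0/0")
print(lan.num_addresses == whole_ipv4.num_addresses ** 2)
```

That scale is part of why habits carried over from IPv4, such as sizing subnets tightly to conserve addresses, do not carry over directly.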

Any views, positions, statements or opinions of a guest blog post are those of the author alone and do not represent those of ARIN. ARIN does not guarantee the accuracy, completeness or validity of any claims or statements, nor shall ARIN be liable for any representations, omissions or errors contained in a guest blog post.
